- After the earlier study, I should in theory be ready to try my hand at a few small programs. Before that, there were a few things to sort out:

- Be clear about what I want to do with Hadoop

- Get a rough understanding of MapReduce

- After Ubuntu is rebooted, starting Hadoop reports a connection exception

- Answers:

- I want to use it to explore and mine data

- map = grouping, reduce = merging

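- A reboot clears the tmp folder, which is where the namenode data lives by default. To avoid this, add the following property to core-site.xml (do not forget to create the folder; if you lack permission, create it as root and change its permissions to 777):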

```xml
<property>
  <name>hadoop.tmp.dir</name>
  <value>/usr/local/hadoop/tmp</value>
</property>
```
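As a rough illustration of the "map = grouping, reduce = merging" idea, here is a minimal plain-Java sketch with no Hadoop dependency. The class name `MapReduceSketch`, the method names, and the sample records are all made up for illustration. In real Hadoop the grouping step is done by the framework between the map and reduce phases, so treat this only as a mental model.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical illustration only: mimics map -> group -> reduce in memory.
public class MapReduceSketch {

    // "map": turn every input record into a (key, 1) pair.
    static List<Map.Entry<String, Integer>> map(List<String> records) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String record : records) {
            pairs.add(new AbstractMap.SimpleEntry<>(record, 1));
        }
        return pairs;
    }

    // Grouping: collect the values that share a key.
    static Map<String, List<Integer>> group(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // "reduce": merge each key's values into a single count.
    static Map<String, Integer> reduce(Map<String, List<Integer>> grouped) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int value : entry.getValue()) {
                sum += value;
            }
            counts.put(entry.getKey(), sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        List<String> records = Arrays.asList("a", "b", "a", "c", "a");
        System.out.println(reduce(group(map(records)))); // prints {a=3, b=1, c=1}
    }
}
```

The three methods are kept separate on purpose, so that each one lines up with a phase of a MapReduce job.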

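- Big data: my first thought was how the data sitting in existing relational databases could be used together with Hadoop. I looked up some material online, and the class to use is DBInputFormat. So let's write a small example that reads data from a database, processes it, and writes the result to a file.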

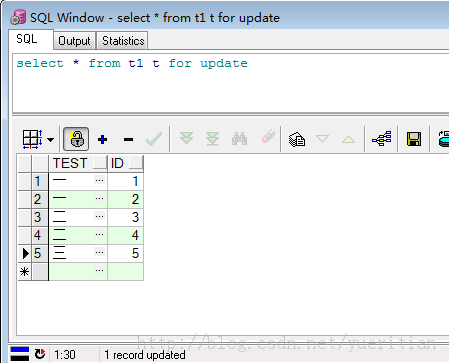

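- Keep the database table simple: id is a numeric (integer) column and test is a string column. The requirement is just as simple: count how many times each value of the TEST column occurs.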

- The data reader class:

```java
import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;

import org.apache.hadoop.io.Writable;
import org.apache.hadoop.mapreduce.lib.db.DBWritable;

// One row of the table: Writable for Hadoop serialization, DBWritable for JDBC access.
public class DBRecoder implements Writable, DBWritable {
    String test;
    int id;

    // Hadoop serialization; the field order must match readFields(DataInput).
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(test);
        out.writeInt(id);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        test = in.readUTF();
        id = in.readInt();
    }

    // Populate the record from the current row of the JDBC result set.
    @Override
    public void readFields(ResultSet rs) throws SQLException {
        test = rs.getString("test");
        id = rs.getInt("id");
    }

    // Only needed when records are written back to a database.
    @Override
    public void write(PreparedStatement stmt) throws SQLException {
        stmt.setString(1, test);
        stmt.setInt(2, id);
    }
}
```
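To see what the `write(DataOutput)` / `readFields(DataInput)` pair is doing, the following sketch round-trips a record through a byte buffer using only the JDK. `RecordRoundTrip` and its nested `Record` class are hypothetical stand-ins for `DBRecoder` with the Hadoop interfaces left out, so this shows the serialization idea rather than how Hadoop itself invokes these methods.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

public class RecordRoundTrip {

    // Hypothetical stand-in for DBRecoder, without the Hadoop interfaces.
    static class Record {
        String test;
        int id;

        void write(DataOutput out) throws IOException {
            out.writeUTF(test);
            out.writeInt(id);
        }

        // Fields are read back in exactly the order they were written.
        void readFields(DataInput in) throws IOException {
            test = in.readUTF();
            id = in.readInt();
        }
    }

    // Serialize a record to bytes, then rebuild a fresh copy from those bytes.
    static Record roundTrip(Record original) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(buffer)) {
                original.write(out);
            }
            Record copy = new Record();
            try (DataInputStream in =
                     new DataInputStream(new ByteArrayInputStream(buffer.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Record record = new Record();
        record.test = "hello";
        record.id = 7;
        Record copy = roundTrip(record);
        System.out.println(copy.test + " " + copy.id); // prints hello 7
    }
}
```

Note that `writeUTF` throws a `NullPointerException` for a null string, so a nullable database column would need extra handling.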

- The MapReduce job class:

```java
import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.db.DBConfiguration;
import org.apache.hadoop.mapreduce.lib.db.DBInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

public class DataCountTest {

    // Emits (value of the test column, 1) for every row read from the database.
    public static class TokenizerMapper extends Mapper<LongWritable, DBRecoder, Text, IntWritable> {
        @Override
        public void map(LongWritable key, DBRecoder value, Context context)
                throws IOException, InterruptedException {
            context.write(new Text(value.test), new IntWritable(1));
        }
    }

    // Sums the 1s emitted for each distinct value of the test column.
    public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        private IntWritable result = new IntWritable();

        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            int sum = 0;
            for (IntWritable val : values) {
                sum += val.get();
            }
            result.set(sum);
            context.write(key, result);
        }
    }

    public static void main(String[] args) throws Exception {
        // The output path is hard-coded here, overriding any command-line arguments.
        args = new String[1];
        args[0] = "hdfs://192.168.203.137:9000/user/chenph/output1111221";

        Configuration conf = new Configuration();
        // JDBC driver class, connection URL, user name, password.
        DBConfiguration.configureDB(conf, "oracle.jdbc.driver.OracleDriver",
                "jdbc:oracle:thin:@192.168.101.179:1521:orcl", "chenph", "chenph");
        String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

        Job job = new Job(conf, "DB count");
        job.setJarByClass(DataCountTest.class);
        job.setMapperClass(TokenizerMapper.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        // Read columns id and test from table t1, ordered by id, with no WHERE condition.
        String[] fields1 = { "id", "test" };
        DBInputFormat.setInput(job, DBRecoder.class, "t1", null, "id", fields1);
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[0]));

        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```

--------------------------------------------------------------------------------------------------

Problems encountered during development:

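- `Job` (as constructed here with `new Job(conf, name)`) is marked as deprecated, and I have not yet found what replaces it. In Hadoop 2.x and later, the `Job.getInstance(conf, name)` factory method takes the place of the deprecated constructors.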

- Garbled characters: Hadoop assumes UTF-8 by default, so data that is read in GBK has to be converted explicitly.

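- Material on this topic is scarce, and what exists covers old versions; there is almost nothing for the new version. The code above was pieced together from various sources, and there are many parts I still do not fully understand, so I need to study the official documentation further.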

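- While looking for material, I saw people say that this approach has problems with genuinely large data volumes: the concurrency is too high and can bring the database down almost instantly. The usual practice is to export the data to text files first.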
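For the character-encoding problem mentioned above, a minimal sketch of the usual fix is to decode the raw bytes as GBK explicitly instead of letting them be read as UTF-8. The class `GbkDecode` and the method `decodeGbk` are hypothetical names. In a Hadoop mapper the bytes and length would typically come from `Text.getBytes()` and `Text.getLength()`, which is an assumption about where the GBK data enters the job. The sketch also assumes the JDK in use ships the GBK charset.

```java
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;

public class GbkDecode {
    private static final Charset GBK = Charset.forName("GBK");

    // Decode the first `length` bytes of the buffer as GBK rather than UTF-8.
    static String decodeGbk(byte[] bytes, int length) {
        return new String(bytes, 0, length, GBK);
    }

    public static void main(String[] args) {
        String original = "\u4e2d\u6587"; // the word "Chinese", written in Chinese
        byte[] gbkBytes = original.getBytes(GBK);

        // Reading GBK bytes as UTF-8 produces replacement characters.
        String wrong = new String(gbkBytes, StandardCharsets.UTF_8);
        String right = decodeGbk(gbkBytes, gbkBytes.length);

        System.out.println(right.equals(original)); // prints true
        System.out.println(wrong.equals(original)); // prints false
    }
}
```

The length parameter matters with `Text`, because its backing array can be longer than the valid data it holds.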