前言

线程的组织形式对程序的性能影响是至关重要的,本篇博文主要以下面一种情况来介绍线程组织形式:

- 2D grid 2D block

线程索引

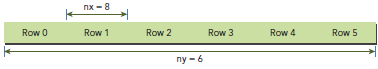

一般,一个矩阵以线性存储在global memory中的,并以行来实现线性:

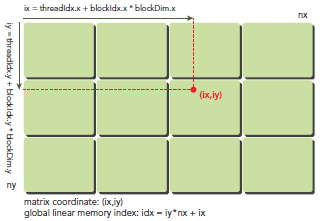

在kernel里,线程的唯一索引非常有用,为了确定一个线程的索引,我们以2D为例:

- 线程和block索引

- 矩阵中元素坐标

- 线性global memory 的偏移

首先可以将thread和block索引映射到矩阵坐标:

ix = threadIdx.x + blockIdx.x * blockDim.x

iy = threadIdx.y + blockIdx.y * blockDim.y

之后可以利用上述变量计算线性地址:

idx = iy * nx + ix

上图展示了block和thread索引,矩阵坐标以及线性地址之间的关系。

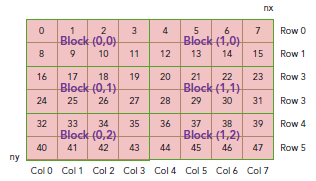

现在可以验证出下面的关系:

thread_id(2,1)block_id(1,0) coordinate(6,1) global index 14 ival 14

下图显示了三者之间的关系:

代码

int main(int argc, char **argv) { printf("%s Starting...\n", argv[0]); // set up device int dev = 0; cudaDeviceProp deviceProp; CHECK(cudaGetDeviceProperties(&deviceProp, dev)); printf("Using Device %d: %s\n", dev, deviceProp.name); CHECK(cudaSetDevice(dev));

// set up date size of matrix int nx = 1<<14; int ny = 1<<14; int nxy = nx*ny; int nBytes = nxy * sizeof(float); printf("Matrix size: nx %d ny %d\n",nx, ny);

// malloc host memory float *h_A, *h_B, *hostRef, *gpuRef; h_A = (float *)malloc(nBytes); h_B = (float *)malloc(nBytes); hostRef = (float *)malloc(nBytes); gpuRef = (float *)malloc(nBytes);

// initialize data at host side double iStart = cpuSecond(); initialData (h_A, nxy); initialData (h_B, nxy); double iElaps = cpuSecond() - iStart; memset(hostRef, 0, nBytes); memset(gpuRef, 0, nBytes);

// add matrix at host side for result checks iStart = cpuSecond(); sumMatrixOnHost (h_A, h_B, hostRef, nx,ny); iElaps = cpuSecond() - iStart;

// malloc device global memory float *d_MatA, *d_MatB, *d_MatC; cudaMalloc((void **)&d_MatA, nBytes); cudaMalloc((void **)&d_MatB, nBytes); cudaMalloc((void **)&d_MatC, nBytes);

// transfer data from host to device cudaMemcpy(d_MatA, h_A, nBytes, cudaMemcpyHostToDevice); cudaMemcpy(d_MatB, h_B, nBytes, cudaMemcpyHostToDevice);

// invoke kernel at host side int dimx = 32; int dimy = 32; dim3 block(dimx, dimy); dim3 grid((nx+block.x-1)/block.x, (ny+block.y-1)/block.y); iStart = cpuSecond(); sumMatrixOnGPU2D <<< grid, block >>>(d_MatA, d_MatB, d_MatC, nx, ny); cudaDeviceSynchronize(); iElaps = cpuSecond() - iStart; printf("sumMatrixOnGPU2D <<<(%d,%d), (%d,%d)>>> elapsed %f sec\n", grid.x, grid.y, block.x, block.y, iElaps);

// copy kernel result back to host side cudaMemcpy(gpuRef, d_MatC, nBytes, cudaMemcpyDeviceToHost);

// check device results checkResult(hostRef, gpuRef, nxy);

// free device global memory cudaFree(d_MatA); cudaFree(d_MatB); cudaFree(d_MatC);

// free host memory free(h_A); free(h_B); free(hostRef); free(gpuRef);

// reset device cudaDeviceReset(); return (0);}

编译运行:

$ nvcc -arch=sm_20 sumMatrixOnGPU-2D-grid-2D-block.cu -o matrix2D$ ./matrix2D输出:

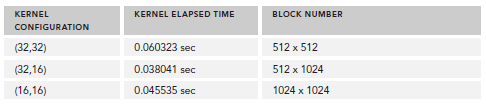

./a.out Starting...Using Device 0: Tesla M2070Matrix size: nx 16384 ny 16384sumMatrixOnGPU2D <<<(512,512), (32,32)>>> elapsed 0.060323 secArrays match.

接下来,我们更改block配置为32x16,重新编译,输出为:

sumMatrixOnGPU2D <<<(512,1024), (32,16)>>> elapsed 0.038041 sec

可以看到,性能提升了一倍,直观的来看,我们会认为第二个配置比第一个多了一倍的block所以性能提升一倍,实际上也确实是因为block增加了。但是,如果你继续增加block的数量,则性能又会降低:

sumMatrixOnGPU2D <<< (1024,1024), (16,16) >>> elapsed 0.045535 sec

下图展示了不同配置的性能;

关于性能的分析将在之后的博文中总结,现在只是了解下,本文在于掌握线程组织的方法。

代码下载:CodeSamples.zip